Explaining the world one sketch at a time

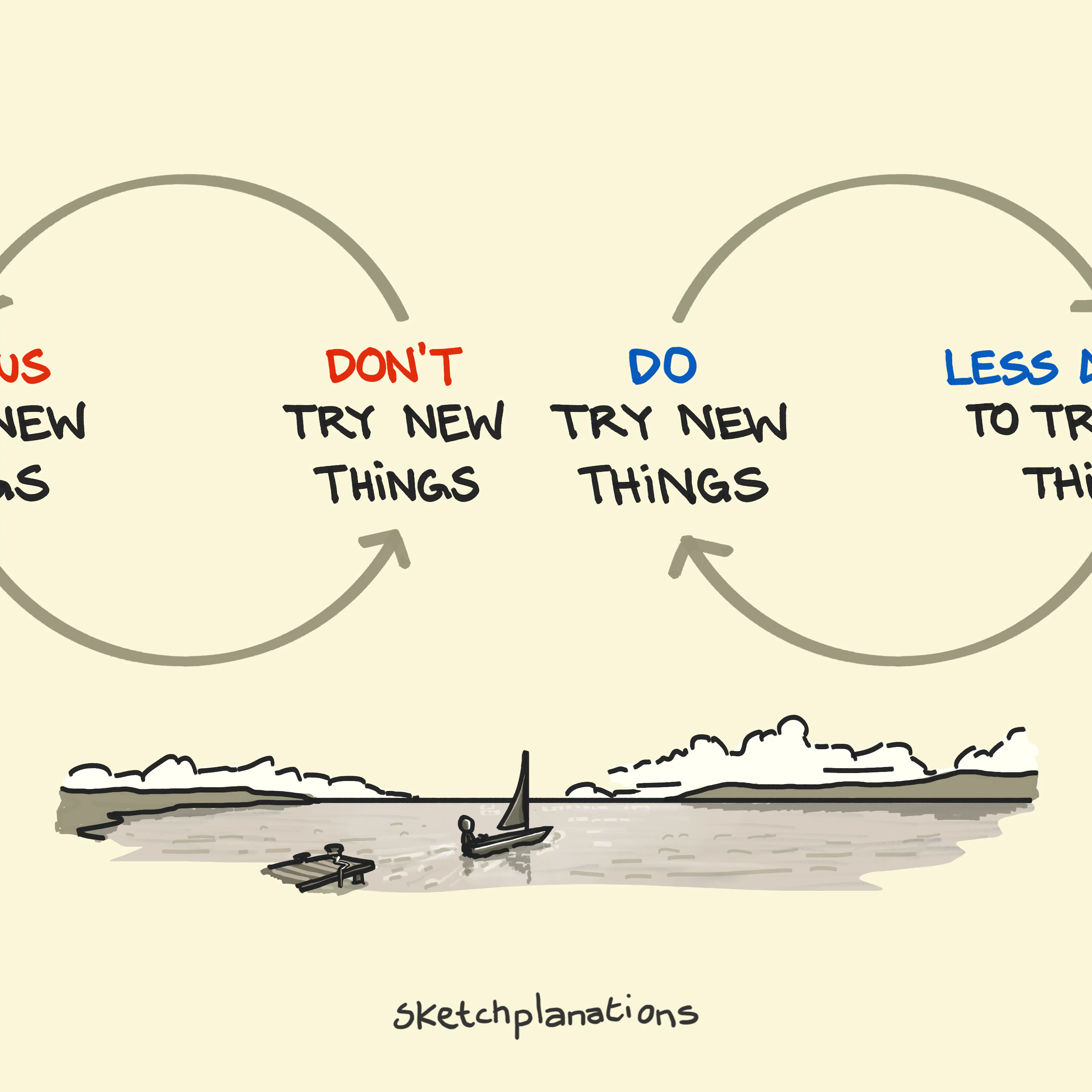

Have great conversations about ideas through simple and insightful sketches. Visual explanations that are fast to read, fun to share, and hard to forget.

Hi, I'm Jono 👋

I'm an author and illustrator creating one of the world's largest libraries of hand-drawn sketches that explain the world, sketch by sketch.

Sketchplanations have been shared millions of times and used in books, articles, classrooms, and more. Learn more about the project, search for a sketch you like, or browse by topic below.

Little Openings

Understanding El Niño

Opening stubborn jars: an escalation of methods

I will study and get ready and perhaps my chance will come — Abraham Lincoln

Thermocline

Recursive Islands

Moravec's Paradox

So Nothing Rhymes with Orange?

The McNamara Fallacy

All models are wrong, but some are useful — George Box quote

The Iceberg Model

Uitwaaien: The Dutch Word for Walking in the Wind

Coffee: The Glorious Solution to The Coffee–Sleep Cycle

Pyrrhic Saving: "Saving" money by spending more

The Volcanic Explosivity Index (VEI): Comparing Eruptions

Mathematics in Everyday Life

When there's a gold rush, sell shovels

Front-load Key Words

Laplace's Demon

In a Book: Big Ideas Little Pictures

5-star rated on Amazon!

Absorb big ideas with crystal-clear understanding through this collection of 135 visual explanations. The book includes 24 exclusive new sketches and enhanced versions of classic favourites, and each page shares life-improving ideas through beautifully simple illustrations.

Perfect for curious minds and visual learners alike.

See inside the book